Legal claims of disparate impact discrimination go something like this: A company uses some system (e.g., hiring test, performance review, risk assessment tool) to make decisions that affect people. Somebody sues, arguing that the system has a disproportionate adverse effect on racial minorities, and presents initial evidence of disparate impact. The company, in turn, defends itself by arguing that the disparate impact is justified: their system sorts people by characteristics that—though incidentally correlated with race—are relevant to its legitimate business purposes. Now, the person who brought the discrimination claim is tasked with coming up with an alternative—that is, a system with less disparate impact that still fulfills the company’s legitimate business interest. If the plaintiff finds such an alternative, it must be adopted. If they don’t, the courts have to, in theory, decide how to trade off between disparate impact and legitimate business purpose.

Much of the research in algorithmic fairness, a discipline concerned with the various discriminatory, unfair, and unjust impacts of algorithmic systems, has taken cues from this legal approach—hence, the deluge of parity-based “fairness” metrics mirroring disparate impact that have received encyclopedic treatment by computer scientists, statisticians, and the like in the past few years. Armed with intuitions closely linked with disparate impact litigation, scholars further formalized the tradeoffs between something like justice and something like business purpose—concepts that crystallized in the literature under the banners of “fairness” and “efficiency.”

But more recently—and perhaps in response to increased attention to the limitations of the disparate impact strategy—a second approach has been gaining traction. It is an arguably more straightforward way of looking at whether a system has wrongfully discriminated by asking the following question: Is race (or sex, gender, religion, and so on) inappropriately affecting the system’s decision in some way? If yes, then we have reason to believe the system is wrongfully discriminating. If no, then the differences in outcomes between racial groups might be explained by factors other than race, which may be “legitimately” used to distinguish among people. To answer this question, it’s thought, we have to get a causal picture of how decisions are made. We have to ask: what are the cause-effect relationships between relevant attributes that lead to a decision? More bluntly: does the attribute of race cause a particular decision outcome?

Causal reasoning responds directly to a variety of complaints that data-based machine learning methods work only on correlations and can never distinguish genuine causal relationships between attributes from spurious ones. In the realm of discrimination detection, correlations with race are problematic for two reasons. First, drawing on non-causal features that correlate with race can lead to outcomes that disparately impact one group in a discriminatory manner. (Redlining practices that used zip codes to deny Black applicants financial services are a classic example.) Second, and crucially, disparate outcomes can themselves be thought of as correlations with race. And, the argument goes, if these correlations are also merely spurious, then an algorithmic procedure could seem wrongfully discriminatory but in fact be perfectly legitimate. Both of these reasons suggest that causal approaches may be better suited to the task of discrimination detection—and algorithmic fairness’s broader goal of assuring racially “fair” outcomes.

It is critical, then, that the actual methodology—which asserts what counts as a “causal” effect of race and what types of causal effects are permissible—be scrutinized. These choices amount to claims about both what discrimination is and what standards we should expect from algorithmic systems that purport to be racially fair.

The “Direct Effect of Race” View of Discrimination

The audit study is the classic social-scientific experiment that claims to detect the effect of race. In these studies, two units are presented as identical in all respects except for a randomly assigned race treatment. These units undergo some experimental procedure, after which an outcome is measured. Any differences in the measured outcome between differently raced units are thus attributed to race.

In Marianne Bertrand and Sendhil Mullainathan’s audit study, “Are Emily and Greg More Employable Than Lakisha and Jamal? A Field Experiment on Labor Market Discrimination,” fictitious résumés with randomly assigned raced names are sent out to employers looking to hire. Since the résumés are identical except for the differently raced names of the applicants, the difference in callback rates between résumés with names raced white (the Emilys and Gregs) and résumés with names raced Black (the Lakishas and Jamals) is interpreted as being due to the decision-makers’ perception of race.

We can tell this story in terms of causal inference. Suppose a résumé reviewer’s callback decision is influenced, or caused, by multiple factors: what’s on a candidate’s résumé as well as her race. Because the audit study holds the résumé’s contents fixed and randomizes only race, any difference in outcomes between the group of units raced white and the group raced Black can be chalked up only to the race “cause,” not the qualifications “cause.” This experimentally measured effect of race, the audit study claims, gives us the “direct effect of race.”
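To make the arithmetic of this reasoning concrete, here is a minimal Python simulation built on loudly stated assumptions. It posits a hypothetical callback model in which the chance of a callback rises with a single qualification score and drops by a fixed amount (`direct_effect`) when the name is raced Black. The function names, the linear form, and every number are illustrative inventions, not Bertrand and Mullainathan’s design or data. Because each simulated résumé is sent twice with only the name changed, the qualification “cause” is identical within each pair, and the difference in callback rates estimates the average direct effect.

```python
import random


def callback_probability(qualification: float, raced_black: bool, direct_effect: float) -> float:
    """Hypothetical callback model (an assumption, not the study's): a baseline,
    a qualification term, and a penalty of `direct_effect` applied only when
    the name is raced Black."""
    p = 0.05 + 0.10 * qualification - (direct_effect if raced_black else 0.0)
    return min(max(p, 0.0), 1.0)  # clamp so it stays a valid probability


def audit_study(n_resumes: int, direct_effect: float, seed: int = 0) -> float:
    """Send each simulated résumé twice, identical except for the name, and
    return the white-minus-Black difference in callback rates."""
    rng = random.Random(seed)
    white_callbacks = 0
    black_callbacks = 0
    for _ in range(n_resumes):
        qualification = rng.random()  # résumé content, shared by both copies
        p_white = callback_probability(qualification, False, direct_effect)
        p_black = callback_probability(qualification, True, direct_effect)
        white_callbacks += int(rng.random() < p_white)
        black_callbacks += int(rng.random() < p_black)
    return (white_callbacks - black_callbacks) / n_resumes


if __name__ == "__main__":
    # With a true (hypothetical) penalty of 0.03, the estimate should land near 0.03.
    print(round(audit_study(200_000, direct_effect=0.03), 3))
```

Note that the sketch encodes race as a single on/off input to the decision, which is precisely the assumption the critique of the audit study takes aim at.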

The “direct effect” interpretation of discrimination is itself a point of dispute in the law. In his now-classic book Causality, Judea Pearl cites the decision in Carson v. Bethlehem Steel Corp. to support his causal theory of discrimination: “The central question in any employment-discrimination case is whether the employer would have taken the same action had the employee been of a different race… and everything else had been the same.”[1][2] This particular framing of racial discrimination indeed appears counterfactual, but we may nevertheless ask whether the causal counterfactual understanding of discrimination is the right normative theory of discrimination. Does reasoning about how decisions may or may not have been “causally” affected by race lead to a robust method for detecting wrongful discrimination on the basis of race?

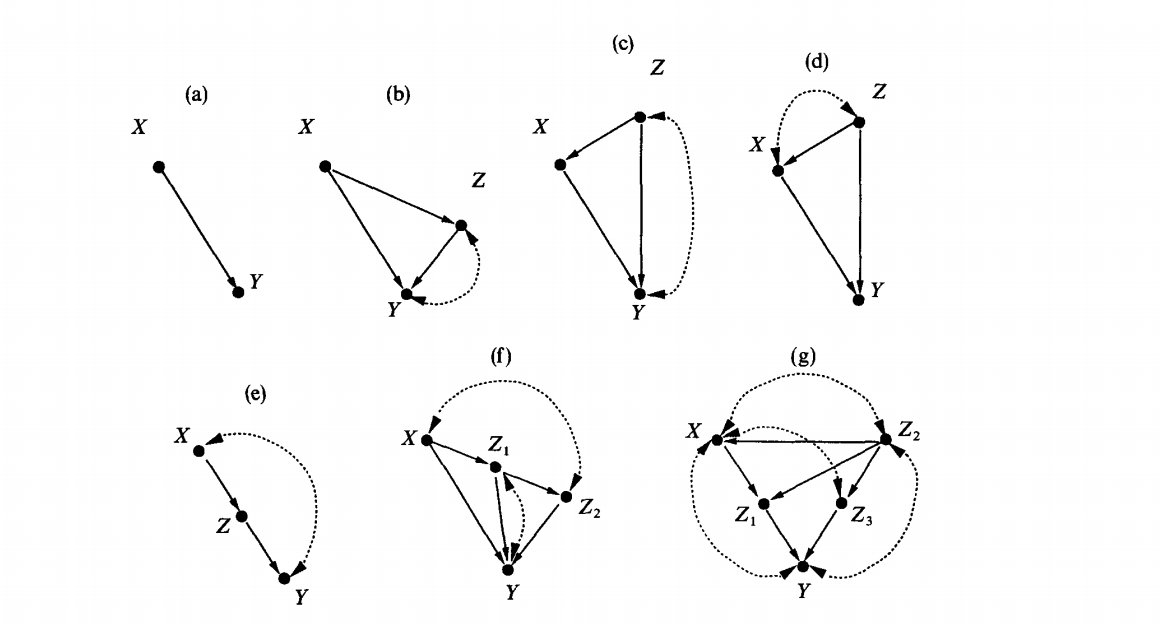

In “Eddie Murphy and the Dangers of Counterfactual Causal Thinking About Detecting Racial Discrimination,” legal scholar and sociologist Issa Kohler-Hausmann offers a conceptual critique of these strategies. In Kohler-Hausmann’s view, the audit study’s attempt to disclose a causal effect of race is incompatible with a social constructivist account of race as a social category. If, as the social constructivist account suggests, race is a social category constituted by a set of social practices, institutions, norms, expectations, and so on, then to speak of a decision as being “directly caused” by race white or race Black is incoherent. In other words, a causal diagram that draws race as a singular node that independently causes downstream effects, or that sees race as something that can be isolated from confounders and mediators such as “socioeconomic status” or “high school performance,” rests upon an incorrect theory of what race is, and of why it matters in our society.

Kohler-Hausmann’s objection bears not only on the methodological validity of the audit study as measuring the causal effect of race. It also issues a serious challenge to any theory of discrimination based on the causal counterfactual model, dominant in explicit and implicit forms throughout the social sciences and the law. Without a robust constructivist theory of race, counterfactual methods rely on what we might call a phenotype-only view of race—a view that sees race as, say, melanin levels, or only as groups of people that tend to be named Jamal rather than Greg. This view, I argue, reduces wrongful discrimination to something like irrationality in decision-making. Consider the intuitive pull of the audit study: We feel revulsion at the different callback rates because we expect that insofar as Greg and Jamal have identical résumés, they are equally qualified for the job. Their names, on the other hand, do not speak to their qualifications. The divergent callback outcomes, then, suggest that decisions are made on grounds that do not have to do with the task at hand. Thus, if the category of race is just a surface-level trait, untethered to broader social facts, and Gregs are nevertheless consistently preferred over Jamals, then these asymmetries are evidence of irrationality on the part of the decision-maker. If the study’s nonzero average direct effect of race indicates discrimination, then the wrongful nature of the disparate outcomes can be understood to refer to the irrationality of a decision-maker’s response to that surface-level category.

But the discrimination-as-irrationality view surely can’t be right. After all, there is a reason why Bertrand and Mullainathan ran the study with the pairings Greg and Jamal, and Emily and Lakisha, and not, say, Katie and Claire. There might in fact be a nonzero direct effect of being named Katie rather than Claire. Who knows! That’s a wholly unexplored space of potential discrimination. And if discrimination were really reducible to a notion of irrationality, the Katie and Claire audit study would perhaps be more interesting, since it would present us with a truly strange, seemingly sui generis phenomenon of irrationality. Only the social constructivist view of race can explain why audit studies typically “manipulate” units across categories like race, and not across categories like the first letter of a first name. And the reason, to be clear, is that the names Greg and Jamal correspond to the cultural and historical material that makes up our notions of whiteness or Blackness.

But perhaps not all is lost with causal diagrams. If race is not understood as an essentially biological, phenotypic, or otherwise surface-level trait, and is instead understood in reference to the social meanings and practices accrued in a society of racial stratification, can causal diagrams nevertheless isolate and measure those effects of race that “count” toward discrimination? Such a view would depart from the Direct Effect of Race view of discrimination and instead refer to the suite of social factors that constitute the groups white and Black.

A constructivist view of race pushes on the underlying intuition of equivalence on which the audit study relies. If we find that being structurally barred from certain educational, labor market, and health outcomes, or being structurally less able to turn one’s individual qualities and interests into impressive résumé contents, or being structurally less likely to receive a callback even when one’s résumé meets the average white standard, is constitutive of what it means to be raced Black in the U.S. labor market, then how does a study on white Greg and Black Jamal (who, the study tells us, differ only in their names and not in any of the other features deeply enmeshed with race in the U.S.) actually show the “effect” of being raced white vs. raced Black?

If the audit study only works given a surface-level, phenotype-only understanding of race, is there an experimental setup that corresponds to a social constructivist understanding of race? Kohler-Hausmann proposes a way forward: “The ideal experiment to detect discrimination in the counterfactual causal model is one in which the researcher…. [zeroes] out the average differences in relevant variables that were produced by the real lived institutions of racial orders.”[3]

A new generation of causal counterfactual fairness methodologists has developed a method that attempts to answer that call. The second part of this post will address these new methods, and the persistent dangers in thinking counterfactually and causally about racial discrimination.


1. Pearl, Judea. Causality. Cambridge: Cambridge University Press, 2009, 131.

2. It should be noted that legal scholars do not regard this decision as being of major significance or influence in interpretations of anti-discrimination law. Nonetheless, counterfactual framings of discrimination are especially common in conservative readings of what equal protection requires. See Students for Fair Admissions v. Harvard College and the ongoing issues surrounding Facebook’s ad targeting of user accounts by age, gender, zip code, and so on.

3. Kohler-Hausmann, Issa. “Eddie Murphy and the Dangers of Counterfactual Causal Thinking About Detecting Racial Discrimination.” January 1, 2019, 1212–1213, emphasis added.
