Issue 1

American Power

Narratives of US decline are coinciding with explosive expressions of US dominance. Are we witnessing the transition between hegemons? The official dawn of multipolarity? Or force and hubris continuous with the Cold War and the unipolar moment?

The first issue of Phenomenal World features thirteen essays and interviews on American power.

Selected Posts

Politics of the Price Level

Newsletters

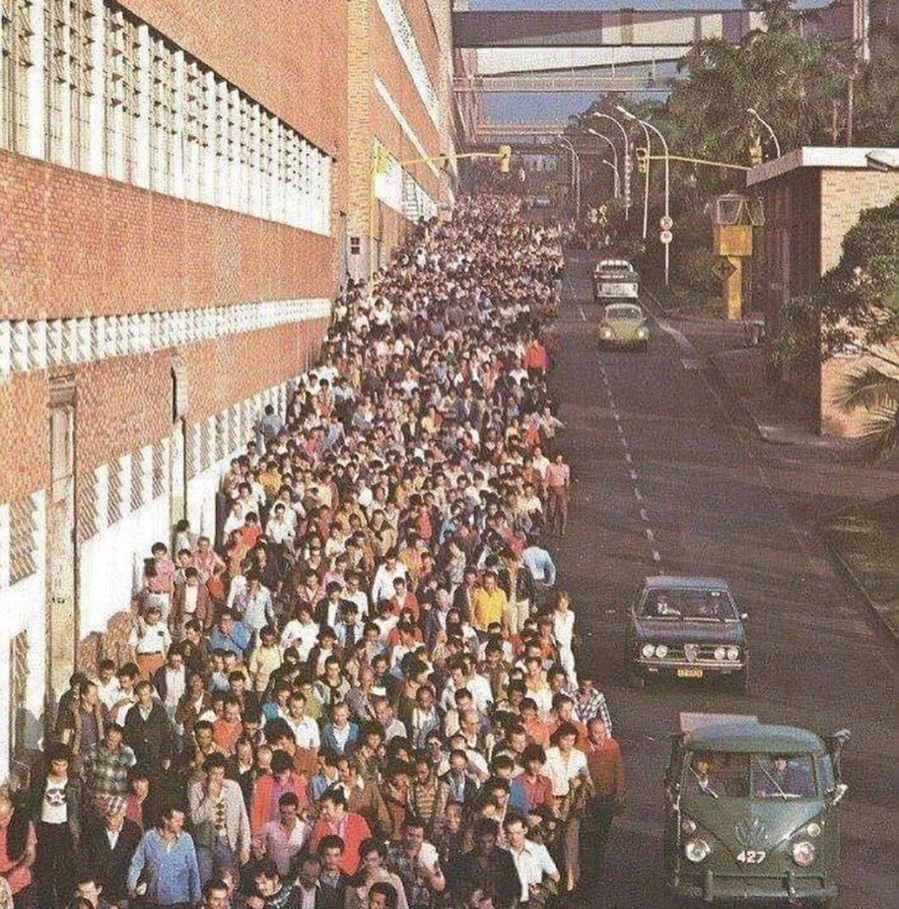

The largest private-sector employers in the United States today are a mix of retail and parcel companies that have all built out sophisticated logistical operations. In the post-war era, the largest employers were all in manufacturing, and warehousing and distribution were both seen merely as supporting long production runs. In 1962, management theorist Peter Drucker referred to distribution as “the economy’s dark continent.”

In a new monthly newsletter column, Benjamin Fong examines the employer behemoths of the twenty-first century—their business models, their management techniques, and the workers and worker organizing that populate their supply chains.

June 4, 2026

Analysis

Trucking’s Window of Opportunity

The coming restructuring of US motor carrier logistics

The Polycrisis is a monthly column on geopolitics and climate by Tim Sahay and Kate Mackenzie. Follow The Polycrisis on Bluesky, LinkedIn, and Twitter, and on their website, thepolycrisis.org, where you can find the Polycrisis podcast, Electric World Order.

Many of the processes that are reshaping the globe find stark expression in Latin America—the extraction of key minerals for green technologies, the transformation of vast tracts of land for monocrop agriculture, the ravages of climate catastrophe, the rise of the new right, and the dynamics of Great Power competition. Amidst the mixed legacies of twentieth-century global South development, the tensions and trends of the international political economy are now concentrated in the region.

In Meridional, a monthly newsletter column, Fernando Rugitsky takes his cues from Gramsci’s “meridional questions” to situate the latest developments in a planetary context.

May 15, 2026

Analysis

The Economic Consequences of the War

The Hormuz shock, inflation targeting, and the prospects of a new cycle of global monetary tightening

Sanctions

The Sanctions Age

On Agathe Demarais’s “Backfire: How Sanctions Reshape the World Against US Interests”

Who Benefits From Sanctions?

On “How Sanctions Work: Iran and the Impact of Economic Warfare” by Narges Bajoghli, Vali Nasr, Djavad Salehi-Isfahani, and Ali Vaez

More from the PW Archive

September 24, 2025

Analysis

The Anti-Climate Common Sense

How a new climate common sense was built and how Trump’s assault threatens to unravel it

February 3, 2022

Analysis

Acute Dollar Dominance

The dollar system, original sin, and sovereign debt since the pandemic

December 12, 2024

Interviews

Political Investments

An interview with Thomas Ferguson on the 2024 US election

November 28, 2025

Analysis

After Boric

Assessing four years of Chile’s state-led development agenda

November 9, 2022

Analysis

A New Non-Alignment

How developing countries are flouting Western sanctions and playing the great powers off each other

June 3, 2023

Reviews

Supply-Side Coalitions

On Brent Cebul’s “Illusions of Progress: Business, Poverty, and Liberalism in the American Century”